Display: Level of display. 'off' displays no output; 'iter' displays output at each iteration; 'final' (default) displays just the final output.

GradObj: Gradient for the objective function defined by the user. See the description of fun to see how to define the gradient in fun. You must provide the gradient to use the large-scale method. It is optional for the medium-scale method.

MaxFunEvals: Maximum number of function evaluations allowed.

options: Options provides the function-specific details for the options parameters.

Function Arguments contains general descriptions of arguments returned by fmincon. This section provides function-specific details for exitflag, lambda, and output:

exitflag: Describes the exit condition. A value greater than 0 means the function converged to a solution x. A value of 0 means the maximum number of function evaluations or iterations was exceeded. A value less than 0 means the function did not converge to a solution.

lambda: Structure containing the Lagrange multipliers at the solution x (separated by constraint type).

output: Structure containing information about the optimization. Its fields include the number of PCG iterations (large-scale algorithm only), the final step size taken (medium-scale algorithm only), and a measure of first-order optimality (large-scale algorithm only).

For large-scale bound constrained problems, the first-order optimality is the infinity norm of v.*g, where v is defined as in Box Constraints, and g is the gradient.

For large-scale problems with only linear equalities, the first-order optimality is the infinity norm of the projected gradient (i.e., the gradient projected onto the nullspace of Aeq).

fmincon uses the following optimization options parameters. Some parameters apply to all algorithms, some are only relevant when using the large-scale algorithm, and others are only relevant when using the medium-scale algorithm. You can use optimset to set or change the values of these fields in the parameters structure, options. See Optimization Parameters for detailed information.

We start by describing the LargeScale option since it states a preference for which algorithm to use. It is only a preference since certain conditions must be met to use the large-scale algorithm. For fmincon, you must provide the gradient (see the description of fun to see how) or else the medium-scale algorithm is used.

LargeScale: Use the large-scale algorithm if possible when set to 'on'. Use the medium-scale algorithm when set to 'off'.

These parameters are used by both the medium-scale and large-scale algorithms:

Diagnostics: Print diagnostic information about the function to be minimized.
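As a rough sketch of how the options structure and the extra output arguments fit together, the script below sets a few of the parameters with optimset and then inspects exitflag, output, and lambda. The objective and constraint data are made up for illustration, the Optimization Toolbox is assumed to be installed, and the option names follow the toolbox version this page describes; newer releases may ignore or rename some of them.

```matlab
% Hypothetical problem: minimize (x1 - 2)^2 + (x2 - 1)^2 subject to x1 + x2 <= 2.
fun = @(x) (x(1) - 2)^2 + (x(2) - 1)^2;
x0  = [0; 0];                 % initial estimate (feasible)
A   = [1 1];                  % A*x <= b encodes x1 + x2 <= 2
b   = 2;

% Option names as used in the text; assumed valid for this toolbox version.
options = optimset('LargeScale', 'off', ...   % prefer the medium-scale algorithm
                   'Display', 'iter', ...     % show output at each iteration
                   'MaxFunEvals', 500);       % cap on function evaluations

% Empty matrices stand in for the unused Aeq, beq, lb, ub, and nonlcon.
[x, fval, exitflag, output, lambda] = fmincon(fun, x0, A, b, ...
    [], [], [], [], [], options);

if exitflag > 0
    disp('Converged to a solution.');
elseif exitflag == 0
    disp('Maximum number of function evaluations or iterations exceeded.');
else
    disp('Did not converge to a solution.');
end

disp(lambda.ineqlin);         % multipliers for the linear inequalities
```

Checking exitflag before trusting x is the main point here; output and lambda are only worth inspecting once the run is known to have converged.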
The ith component of the gradient g is the partial derivative of f with respect to the ith component of x.

If the Hessian matrix can also be computed and the Hessian parameter is 'on', i.e., options = optimset('Hessian','on'), then the function fun must return the Hessian value H, a symmetric matrix, at x in a third output argument. By checking the value of nargout, fun can avoid computing H when it is called with only one or two output arguments (the case where the optimization algorithm needs only the values of f and g but not H). In outline, fun first computes the objective function value f at x; if nargout > 1 (fun called with two output arguments), it also computes g, the gradient of the function evaluated at x; it computes H only when a third output is requested.

The Hessian matrix is the second partial derivatives matrix of f at the point x. That is, the (i,j)th component of H is the second partial derivative of f with respect to x(i) and x(j). The Hessian is by definition a symmetric matrix.

nonlcon: The function that computes the nonlinear inequality constraints c(x) <= 0 and the nonlinear equality constraints ceq(x) = 0. When nargout > 2, nonlcon is being called with 4 outputs and must also return the constraint gradients GC and GCeq.

If nonlcon returns a vector c of m components and x has length n, where n is the length of x0, then the gradient GC of c(x) is an n-by-m matrix, where GC(i,j) is the partial derivative of c(j) with respect to x(i) (i.e., the jth column of GC is the gradient of the jth inequality constraint c(j)). Likewise, if ceq has p components, the gradient GCeq of ceq(x) is an n-by-p matrix, where GCeq(i,j) is the partial derivative of ceq(j) with respect to x(i) (i.e., the jth column of GCeq is the gradient of the jth equality constraint ceq(j)).
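The nargout pattern for an objective function that can return its gradient and Hessian might look like the following minimal sketch. The function name myfun and the quadratic objective are hypothetical, chosen only so that the derivatives are easy to verify by hand.

```matlab
function [f, g, H] = myfun(x)
% MYFUN  Hypothetical objective f(x) = x(1)^2 + 3*x(2)^2 + x(1)*x(2).
f = x(1)^2 + 3*x(2)^2 + x(1)*x(2);   % objective function value at x
if nargout > 1                        % called with two or more outputs
    g = [2*x(1) + x(2);               % partial derivative w.r.t. x(1)
         x(1) + 6*x(2)];              % partial derivative w.r.t. x(2)
    if nargout > 2                    % called with three outputs
        H = [2 1;                     % second partial derivatives;
             1 6];                    % constant and symmetric here
    end
end
end
```

Saved as myfun.m, this would be passed as @myfun together with options that switch the gradient and Hessian on, for example optimset('GradObj','on','Hessian','on'). When the solver asks for only f, neither g nor H is computed.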

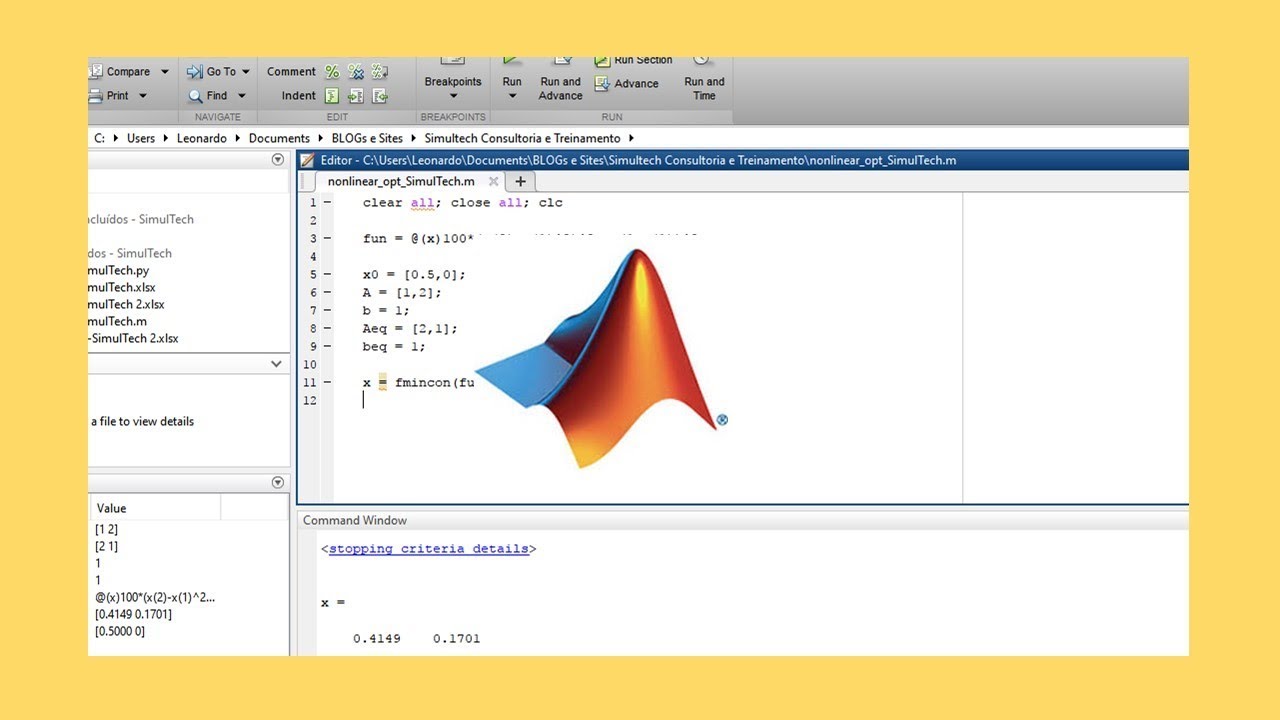
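The n-by-m layout of the constraint gradients is easy to get transposed, so here is a small sketch of a nonlcon function with n = 2 variables, m = 2 inequalities, and p = 1 equality. The name mycon and the constraints themselves are invented for illustration.

```matlab
function [c, ceq, GC, GCeq] = mycon(x)
% MYCON  Hypothetical nonlinear constraints for a two-variable problem.
%   Inequalities:  x(1)^2 + x(2)^2 - 1 <= 0   and   -x(1)*x(2) <= 0
%   Equality:      x(1) - x(2)^2 = 0
c   = [x(1)^2 + x(2)^2 - 1;
       -x(1)*x(2)];
ceq = x(1) - x(2)^2;
if nargout > 2                   % nonlcon called with 4 outputs
    % n-by-m: column j is the gradient of c(j)
    GC   = [2*x(1), -x(2);
            2*x(2), -x(1)];
    % n-by-p: column j is the gradient of ceq(j)
    GCeq = [1;
            -2*x(2)];
end
end
```

Note that GC is the transpose of the usual Jacobian of c. To have fmincon use these gradients, the corresponding constraint-gradient option (GradConstr in optimset, which the text here does not cover) would also need to be switched on.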

fmincon (Optimization Toolbox)

Find a minimum of a constrained nonlinear multivariable function

fmincon minimizes f(x) subject to c(x) <= 0, ceq(x) = 0, A*x <= b, Aeq*x = beq, and lb <= x <= ub, where x, b, beq, lb, and ub are vectors, A and Aeq are matrices, c(x) and ceq(x) are functions that return vectors, and f(x) is a function that returns a scalar. f(x), c(x), and ceq(x) can be nonlinear functions.

x = fmincon(fun,x0,A,b,Aeq,beq,lb,ub,nonlcon)
x = fmincon(fun,x0,A,b,Aeq,beq,lb,ub,nonlcon,options)
x = fmincon(fun,x0,A,b,Aeq,beq,lb,ub,nonlcon,options,P1,P2,...)
[...] = fmincon(...)

fmincon finds a constrained minimum of a scalar function of several variables starting at an initial estimate. This is generally referred to as constrained nonlinear optimization or nonlinear programming.

The simplest form, x = fmincon(fun,x0,A,b), starts at x0 and finds a minimum x to the function described in fun subject to the linear inequalities A*x <= b.

When fun is called with two output arguments (nargout > 1), it must also return the gradient. The gradient consists of the partial derivatives of f at the point x.
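To make the calling sequence concrete, here is a minimal sketch of a call with a linear inequality and bounds. The problem data are invented for illustration and the Optimization Toolbox is assumed to be installed.

```matlab
% Hypothetical problem: minimize (x1 - 2)^2 + (x2 - 1)^2
% subject to x1 + x2 <= 2 and 0 <= x <= 3.
fun = @(x) (x(1) - 2)^2 + (x(2) - 1)^2;

x0  = [0; 0];           % initial estimate
A   = [1 1];            % A*x <= b encodes x1 + x2 <= 2
b   = 2;
Aeq = [];  beq = [];    % no linear equality constraints
lb  = [0; 0];           % lower bounds on x
ub  = [3; 3];           % upper bounds on x

[x, fval] = fmincon(fun, x0, A, b, Aeq, beq, lb, ub);
```

For this made-up problem the constrained minimizer can be worked out by hand as x = [1.5; 0.5] with fval = 0.5 (the projection of the unconstrained minimum [2; 1] onto the line x1 + x2 = 2), so the returned values should be close to those. Unused arguments in the middle of the list are passed as empty matrices.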
